
Virginia Tech, a university in the United States, has published a report outlining potential biases in the artificial intelligence (AI) tool ChatGPT, suggesting variations in its outputs on environmental justice issues across different counties.

In a recent report, researchers from Virginia Tech alleged that ChatGPT has limitations in delivering area-specific information regarding environmental justice issues.

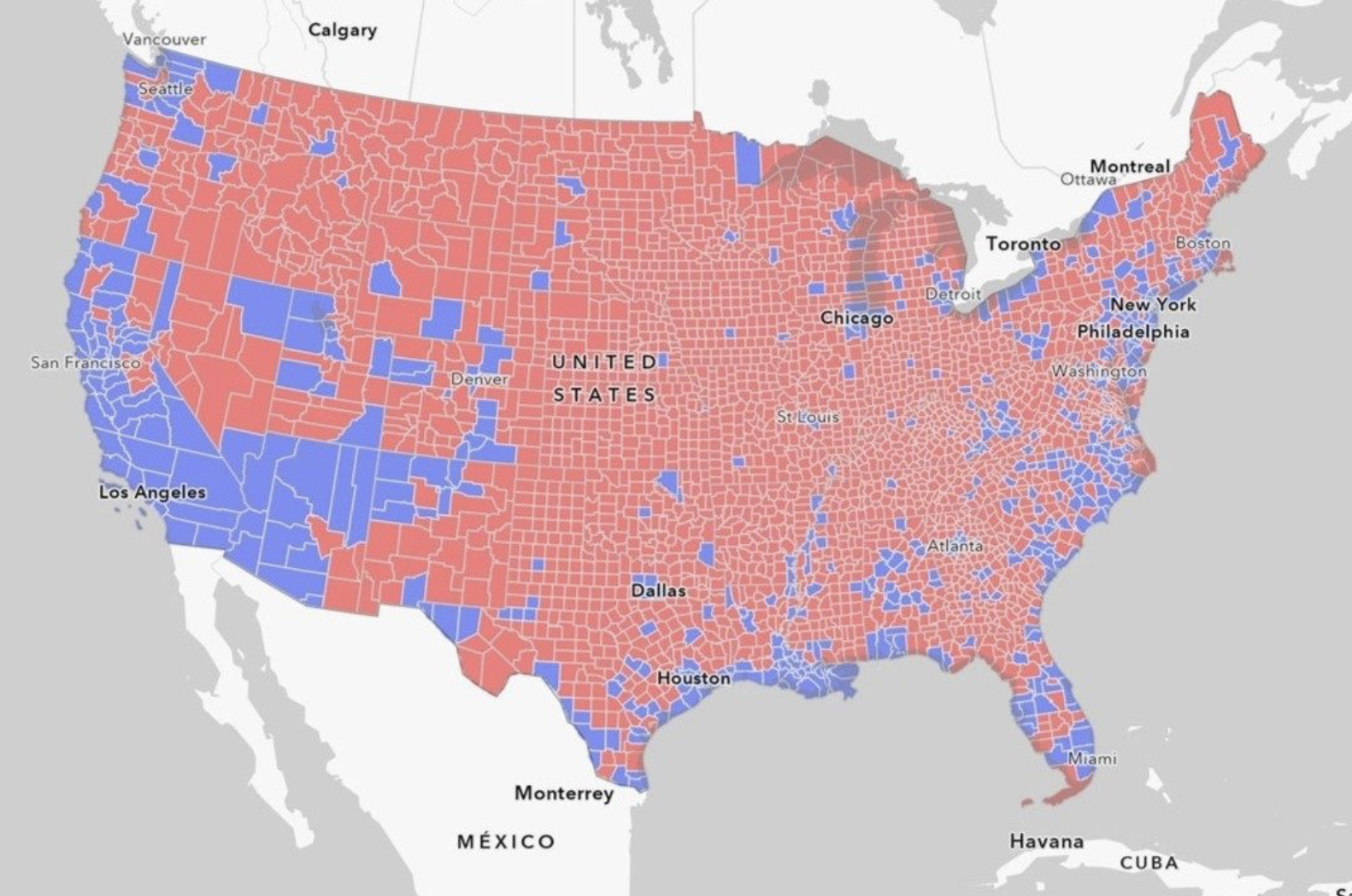

However, the study identified a trend indicating that the information was more readily available for the larger, densely populated states.

“In states with larger urban populations such as Delaware or California, fewer than 1 percent of the population lived in counties that cannot receive specific information.”

Meanwhile, regions with smaller populations lacked equivalent access.

“In rural states such as Idaho and New Hampshire, more than 90 percent of the population lived in counties that could not receive local-specific information,” the report stated.

It further cited a lecturer named Kim from Virginia Tech’s Department of Geography, who urged further research as biases are being discovered.

“While more study is needed, our findings reveal that geographic biases currently exist in the ChatGPT model,” Kim declared.

The research paper also included a map illustrating the extent of the U.S. population without access to location-specific information on environmental justice issues.

Associated: ChatGPT passes neurology exam for first time

This follows recent news that scholars have been discovering potential political biases exhibited by ChatGPT.

On August 25, Cointelegraph reported that researchers from the United Kingdom and Brazil had published a study stating that large language models (LLMs) like ChatGPT output text containing errors and biases that could mislead readers, and that such models have the ability to promote political biases presented by traditional media.

Magazine: Deepfake K-Pop porn, woke Grok, ‘OpenAI has a problem,’ Fetch.AI: AI Eye
